this post was submitted on 30 Jun 2024

572 points (98.1% liked)

Programmer Humor

Welcome to Programmer Humor!

This is a place where you can post jokes, memes, humor, etc. related to programming!

For sharing awful code there's also Programming Horror.

Rules

- Keep content in English

- No advertisements

- Posts must be related to programming or programmer topics

founded 2 years ago

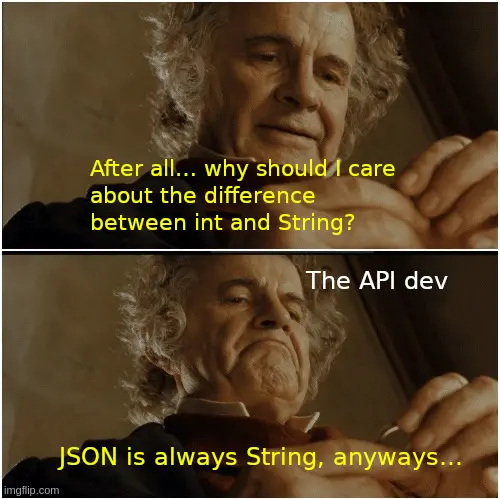

The point is that everything is expressible as JSON numbers; the issue only arises when those numbers are read by JS.

Can you give a specific example? Might help me understand your point.

I'm not sure what a good example would be; my point was that the spec and the RFC are very abstract and never mention any limitations on the number content. Of course the implementations in each language will be more limited than that, and if the limitations differ, users get inconsistent behavior, like this: "Why does JSON.parse corrupt large numbers and how to solve this"
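For what it's worth, here is a minimal sketch of the kind of corruption that article title refers to, assuming a stock `JSON.parse` that reads every number as an IEEE 754 double. The helper name `losesPrecision` and the sample values are made up for illustration:

```javascript
// Hypothetical helper: does this integer literal survive a trip through JSON.parse?
// Assumes `literal` is a plain JSON integer such as "42" or "-17" (no fraction, no exponent).
function losesPrecision(literal) {
  const parsed = JSON.parse(literal);        // always a double-precision Number
  if (!Number.isFinite(parsed)) return true; // too large even for a double's range
  return BigInt(parsed) !== BigInt(literal); // compare exact integer values
}

console.log(JSON.parse('{"id": 9007199254740993}').id); // 9007199254740992
console.log(losesPrecision("9007199254740991"));        // false (2^53 - 1 is still exact)
console.log(losesPrecision("9007199254740993"));        // true  (2^53 + 1 is not)
```

The JSON text itself is fine in both cases; the digits only change once the parser squeezes them into a double.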

This is what I was getting at here: https://programming.dev/comment/10849419 (although I had a typo and said "big" instead of "bug"). The problem is with the parser in those circumstances, not the serialization format or language.

I disagree a bit, in that the schema often doesn't specify limits and operates in the JSON standard's terms: it will say that you should get/send a number, but will not usually say at what point it will break.

This is the opposite of what the C language does, being so specific that it is not even Turing complete (in a theoretical sense; in practice it is).

Then the problem is the schema being underspecified. Take the classic pet store example. It says that the id is int64. https://petstore3.swagger.io/#/store/placeOrder

If some API is so underspecified that it just says "number" then I'd say the schema is wrong. If your JSON parser has no way of passing numbers as arbitrary-length number types (like BigDecimal in Java) then that's a problem with your parser.
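As a rough illustration of the parser being the weak link rather than the format, the sketch below pulls an int64 field out of the raw JSON text as a `BigInt` instead of letting `JSON.parse` turn it into a double. `readBigIntField` is a hypothetical helper, the regex only handles a key that appears once with a plain integer value, and the payload is invented; a real fix would be a parser with proper big-number support.

```javascript
// Hypothetical helper: read one integer field from JSON text without going through Number.
// Assumption: the field name appears exactly once, as a key, with a plain integer value.
function readBigIntField(jsonText, fieldName) {
  // Escape regex metacharacters in the field name.
  const escaped = fieldName.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
  // Capture the digits, and refuse values that continue as a fraction or exponent.
  const pattern = new RegExp(`"${escaped}"\\s*:\\s*(-?\\d+)(?![\\d.eE])`);
  const match = pattern.exec(jsonText);
  if (match === null) {
    throw new Error(`no integer field named ${fieldName}`);
  }
  return BigInt(match[1]); // BigInt keeps every digit
}

// Invented payload with the largest int64 value as the id.
const payload = '{"id": 9223372036854775807, "quantity": 1}';

console.log(JSON.parse(payload).id);         // 9223372036854776000 (rounded to 2^63)
console.log(readBigIntField(payload, "id")); // 9223372036854775807n
```

Same bytes on the wire either way; only the second reader honors the int64 the schema promised.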

I don't think the truly truly extreme edge case of things like C not technically being able to simulate a truly infinite tape in a Turing machine is the sort of thing we need to worry about. I'm sure if the JSON object you're parsing is some astronomically large series of nested objects that specifications might begin to fall apart too (things like the maximum amount of memory any specific processor can have being a finite amount), but that doesn't mean the format is wrong.

And simply choosing to "use string instead" won't solve any of these crazy hypotheticals.

An underspecified schema is indeed a problem, but I find it too common to just shrug off.

Also, you're very right that just using strings will not improve the situation 🤝