45 to 55 watt.

But I make use of it for backup and firewall. No cloud shit.

Between 50W (idle) and 140W (max load). Most of the time it is about 60W.

So about 1.5kWh per day, or 45kWh per month. I pay 0,22€ per kWh (France, 100% renewable energy) so about 9-10€ per month.
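For anyone who wants to redo that arithmetic with their own numbers, here is a minimal sketch. It assumes a steady 60 W average draw, a 30-day month, and the 0.22 €/kWh price quoted above; the function name `monthly_cost` is just made up for illustration.

```python
def monthly_cost(avg_watts, price_per_kwh, days=30):
    """Return (kWh per day, kWh per month, cost per month) for a steady average draw."""
    kwh_per_day = avg_watts * 24 / 1000      # W * h -> Wh, then / 1000 -> kWh
    kwh_per_month = kwh_per_day * days       # assumes the same draw every day
    return kwh_per_day, kwh_per_month, kwh_per_month * price_per_kwh


per_day, per_month, cost = monthly_cost(60, 0.22)
print(f"{per_day:.2f} kWh/day, {per_month:.1f} kWh/month, {cost:.2f} EUR/month")
```

With those inputs it lands at roughly 1.4 kWh per day and a bit under 10 € per month, which is in line with the rounded figures above.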

Are you including nuclear power in renewable or is that a particular provider who claims net 100% renewable?

Net 100% renewable, no nuclear. I can even choose where it comes from (in my case, a wind farm in northwest France). Of course, not all of my electricity comes from there at all times, but I have the guarantee that renewable energy bonds equivalent to my consumption will be bought from there, so it is basically the same.

Thanks. I buy Vattenfall but make net 2/3rds of my own power via rooftop solar.

Mate, kWh is a measure of electricity volume, like gallons is to liquid. Also, 100 watt hours would be a much more sensical way to say the same thing. What you've said in the title is like saying your server uses 1 gallon of water. It's meaningless without a unit of time. Watts is a measure of current flow (pun intended), similar to a measurement like gallons per minute.

For example, if your server uses 100 watts for an hour it has used 100 watt hours of electricity. If your server uses 100 watts for 100 hours it has used 10,000 watt hours of electricity, aka 10 kWh.

My NAS uses about 60 watts at idle, and near 100w when it's working on something. I use an old laptop for a plex server, it probably uses like 50 watts at idle and like 150 or 200 when streaming a 4k movie, I haven't checked tbh. I did just acquire a BEEFY network switch that's going to use 120 watts 24/7 though, so that'll hurt the pocket book for sure. Soon all of my servers should be in the same place, with that network switch, so I'll know exactly how much power it's using.

I came here to say that my tiny Raspberry Pi 4 consumes ~10 watts, but after seeing some people's home server setups and the associated power consumption, I feel like a child in a crowd of adults 😀

I'm using an old laptop with the lid closed. Uses 10w.

All in, including my router, switches, modem, laptop, and NAS, I'm using 50watts +/- 5.

It does everything I need, and I feel like that's pretty efficient.

Quite the opposite. Look at what they need to get a fraction of what you do.

Or use the old quote, "they're compensating for small pp"

I have an old desktop downclocked that pulls ~100W that I'm using as a file server, but I'm working on moving most of my services over to an Intel NUC that pulls ~15W. Nothing wrong with being power efficient.

we're in the same boat, but it does the job and stays under 45°C even under load, so I'm not complaining

Is there a (Linux) command I can run to check my power consumption?

If you have a server with out-of-band/lights-out management such as iDRAC (Dell), iLO (HPE), IPMI (generic, Supermicro, and others) or equivalent, those can measure the server's power draw at each PSU and in total.

Get a Kill-a-Watt meter.

Or smart sockets. I got multiple of them (ZigBee ones), they are precise enough for most uses.

If you have a laptop/something that runs off a battery, upower
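If none of those options are available, a rough software-only estimate is possible on many Intel (and some AMD) machines through the kernel's powercap/RAPL counters. The sketch below is an assumption-heavy illustration, not a replacement for a wall meter: it assumes the counter lives at `/sys/class/powercap/intel-rapl:0/energy_uj`, it only covers the CPU package (not disks, fans, or PSU losses), and on recent kernels reading the file usually needs root. The helper names `average_watts` and `sample` are made up for this example.

```python
import time
from pathlib import Path

# Assumed location of the RAPL energy counter for CPU package 0 (microjoules).
RAPL_ENERGY = Path("/sys/class/powercap/intel-rapl:0/energy_uj")


def average_watts(start_uj, end_uj, seconds, max_uj=None):
    """Average power in watts between two readings of a microjoule counter."""
    delta = end_uj - start_uj
    if delta < 0 and max_uj is not None:
        delta += max_uj  # the counter wrapped around between the two readings
    return delta / 1_000_000 / seconds  # uJ -> J, then J / s -> W


def sample(interval=1.0):
    """Read the counter twice, `interval` seconds apart, and return average watts."""
    start = int(RAPL_ENERGY.read_text())
    time.sleep(interval)
    end = int(RAPL_ENERGY.read_text())
    max_file = RAPL_ENERGY.with_name("max_energy_range_uj")
    max_uj = int(max_file.read_text()) if max_file.exists() else None
    return average_watts(start, end, interval, max_uj)


if __name__ == "__main__":
    try:
        print(f"CPU package: {sample():.1f} W")
    except OSError as exc:  # file missing or not readable without root
        print(f"RAPL counter not readable: {exc}")
```

Expect the number to be well below what a Kill-a-Watt or smart socket reports, since everything outside the CPU package is invisible to it.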

My server uses about 6-7 kWh a day, but it's a dual CPU Xeon running quite a few dockers. Probably the thing that keeps it busiest is being a file server for our family and a Plex server for my extended family (so a lot of the CPU usage is likely transcodes).

With everything on, 100W but I don't have my NAS on all the time and in that case I pull only 13W since my server is a laptop

kWh is a unit of energy, not power

I was really confused by that, and by the fact that the chosen units weren't just W (0.1 kW is pretty weird even)

Wh shouldn't even exist tbh, we should use Joules, less confusing

Watt hours makes sense to me. A watt hour is just a watt draw that runs for an hour, it's right in the name.

Maybe you've just whooooshed me or something, I've never looked into Joules or why they're better/worse.

Joules (J) are the official unit of energy. 1W=1J/s. That means 1Wh=3600J or that 1J is kinda like "1 Watt second". You're right that Wh is easier since everything is rated in Watts and it would be insane to measure energy consumption by seconds. Imagine getting your electric bill and it says you've used 3,157,200,000J.
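To make the conversion concrete, here is the same bill worked back into kWh, using only the definitions above (1 W = 1 J/s, one hour = 3600 s):

$$
1\,\mathrm{Wh} = 1\,\mathrm{W} \times 3600\,\mathrm{s} = 3600\,\mathrm{J},
\qquad
1\,\mathrm{kWh} = 3.6 \times 10^{6}\,\mathrm{J}
$$

$$
\frac{3{,}157{,}200{,}000\,\mathrm{J}}{3.6 \times 10^{6}\,\mathrm{J/kWh}} = 877\,\mathrm{kWh}
$$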

3,157,200,000J

Or just 3.1572GJ.

Which apparently is how this Canadian natural gas company bills its customers: https://www.fortisbc.com/about-us/facilities-operations-and-energy-information/how-gas-is-measured

I guess it wouldn't make sense to measure energy used by gas-powered appliances in Wh since they're not rated in Watts. Still, measuring volume and then converting to energy seems unnecessarily complicated.

Thanks for the explainer, that makes a lot of sense.

Idles at around 24W. It’s amazing that your server only needs .1kWh once and keeps on working. You should get some physicists to take a look at it, you might just have found perpetual motion.

80-110W

Mine runs at about 120 watts per hour.

Please. Watt is an SI unit of power, equivalent to a Joule per second. Watt-hour is a non-SI unit of energy (1 Wh = 3600 J). Learn the difference and use it correctly.

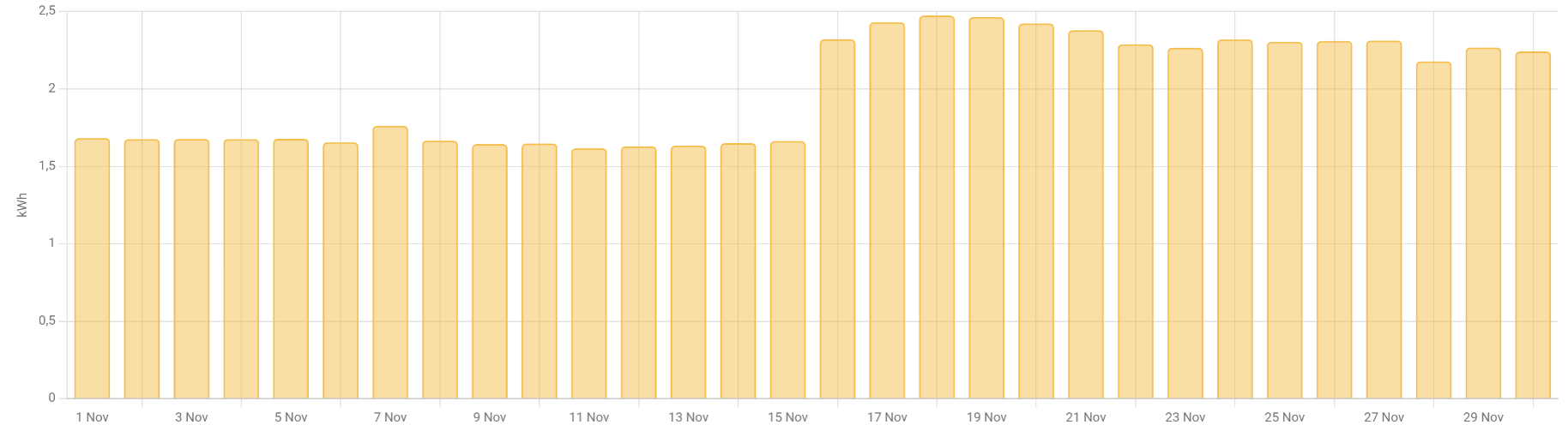

For the whole month of November: 60 kWh. This is for all my servers and network equipment. On average, it draws around 90 watts.
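Going the other way, from a monthly meter reading back to an average draw, is just energy divided by time. A minimal sketch, assuming November's 30 days and a made-up helper name `average_watts_from_kwh`:

```python
def average_watts_from_kwh(kwh, days):
    """Average power in watts for a given energy use over a number of days."""
    hours = days * 24
    return kwh * 1000 / hours  # kWh -> Wh, then Wh / h -> W


print(f"{average_watts_from_kwh(60, 30):.0f} W average")
```

For 60 kWh over 30 days that comes out in the 80 to 90 W range, so the meter total and the quoted average roughly agree.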

You might have your units confused.

0.1kWh over how much time? Per day? Per hour? Per week?

Watt-hours refer to the total energy used to do something, from a starting point to an ending point. It makes no sense to say that a device needs a certain amount of Wh, unless you're talking about something like charging a battery to full.

Power being used by a device (like a computer) is just watts.

Think of the difference between speed and distance. Watts is how fast energy is being used, watt-hours is how much has been used, or will be used.

If you have a 500 watt PC, for example, it uses 500Wh, per hour. Or 12kWh in a day.