this post was submitted on 10 Apr 2024

Technology

LMs aren't thinking or inventing; they're predicting what is supposed to come next, so it's expected that they will produce the same result every time.

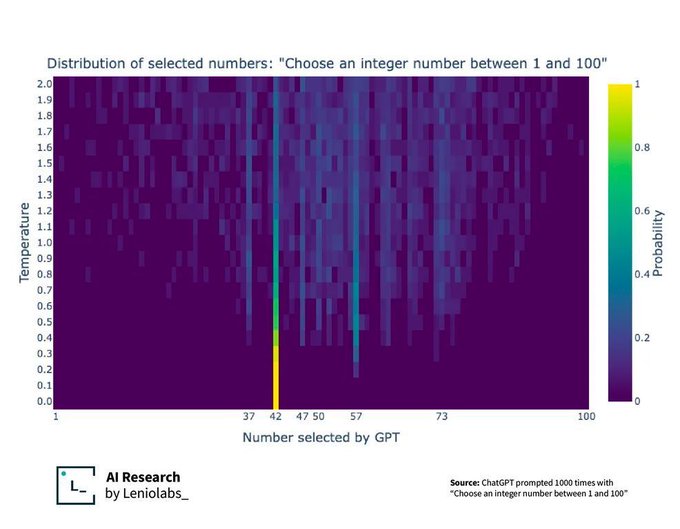

This graph shows a little more about what's happening with the randomness, or "temperature", of the LLM.

It's actually predicting, all at once, the probability of every word (token) it knows coming next.
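A rough sketch of that "all at once" step, assuming the usual setup where the model outputs one raw score (logit) per token and a softmax turns the whole list into probabilities in one go. The five-token vocabulary and the scores are made up for illustration; a real model has tens of thousands of tokens.

```python
import math

def softmax(logits):
    """Turn raw scores (logits) into probabilities that sum to 1."""
    # Subtracting the max keeps exp() from overflowing; it doesn't change the result.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical tiny vocabulary of candidate next tokens, with made-up scores.
vocab = ["42", "37", "7", "73", "17"]
logits = [5.0, 3.0, 2.5, 2.0, 1.5]

probs = softmax(logits)
for token, p in zip(vocab, probs):
    print(f"{token:>3}: {p:.3f}")
```

Every token gets a probability at the same time; nothing has been "picked" yet at this point.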

The temperature then controls how random it is when picking from that list of probable next words. A temperature of 0 means it always picks the most likely next word, which in this case is 42.
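Here's a minimal sketch of the picking step, assuming the common approach of dividing the scores by the temperature before the softmax and treating a temperature of 0 as "just take the top one" (greedy). The function name `pick_next` and the scores are hypothetical, not taken from any particular model.

```python
import math
import random

def pick_next(logits, temperature, rng=random):
    """Return the index of the chosen next token.

    temperature == 0 -> greedy: always the highest-scoring token.
    temperature > 0  -> sample from softmax(logits / temperature).
    """
    if temperature < 0:
        raise ValueError("temperature must be >= 0")
    if temperature == 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    weights = [math.exp(x - m) for x in scaled]
    # random.choices normalizes the weights itself, so no need to divide by the sum.
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

vocab = ["42", "37", "7", "73", "17"]  # hypothetical tokens
logits = [5.0, 3.0, 2.5, 2.0, 1.5]     # made-up scores

rng = random.Random(0)
print([vocab[pick_next(logits, 0.0, rng)] for _ in range(5)])  # greedy: always '42'
print([vocab[pick_next(logits, 1.0, rng)] for _ in range(5)])  # sampled: mostly '42'
```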

As the temperature increases, the picks get more random (but you can see that even at a higher temperature, the distribution still isn't uniformly random).
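To see why a higher temperature flattens the distribution without making it uniform, here's a small sketch under the same assumption (softmax of scores divided by temperature). The scores are made up, and index 0 plays the role of "42".

```python
import math

def probs_at(logits, temperature):
    """Softmax of logits / temperature (temperature must be > 0)."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [5.0, 3.0, 2.5, 2.0, 1.5]  # made-up scores; index 0 plays "42"
uniform = 1 / len(logits)

for t in (0.5, 1.0, 2.0, 10.0):
    p = probs_at(logits, t)
    print(f"T={t:>4}: top={p[0]:.3f}  lowest={min(p):.3f}  (uniform would be {uniform:.3f})")
```

The top token's share shrinks as T grows, but as long as the scores differ it stays above 1/N: dividing by a large T squeezes the gaps between scores, it never erases them.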

Except it clearly doesn't produce the same result every time. You're not making a good case for whatever you're trying to say.

They add some fuzziness to it so it doesn't give the exact same result every time. Say one gets a score of 90, another 85, and another 80. The 90 will be picked more often, but they sometimes let it pick the 85, or even the 80. It's perfectly expected, and you can see that result here, with 42 being very common, a few others being fairly common, and most being extremely uncommon.
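A quick simulation of the 90/85/80 example, assuming those scores are treated like logits and sampled with a softmax at a temperature of 5 (both are assumptions for illustration; a real model's scores and settings will differ). At that temperature the theoretical odds work out to roughly 66%, 24%, and 9%.

```python
import math
import random
from collections import Counter

scores = [90, 85, 80]  # the three example scores from the comment
temperature = 5.0      # assumed value, chosen so the lower scores actually show up

# Convert scores to sampling weights: exp((score - best) / temperature).
best = max(scores)
weights = [math.exp((s - best) / temperature) for s in scores]

rng = random.Random(42)
picks = rng.choices(scores, weights=weights, k=10_000)
counts = Counter(picks)
for s in scores:
    print(s, counts[s])
```

The 90 wins most of the time, the 85 shows up regularly, and the 80 appears occasionally, which is the same "one very common answer, a few fairly common ones" shape as the graph.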